Probabilistic Graphical Models

CSC 535 | Spring 2023 | MW 12:30 - 1:45 PM | Gould-Simpson 701

Description of Course

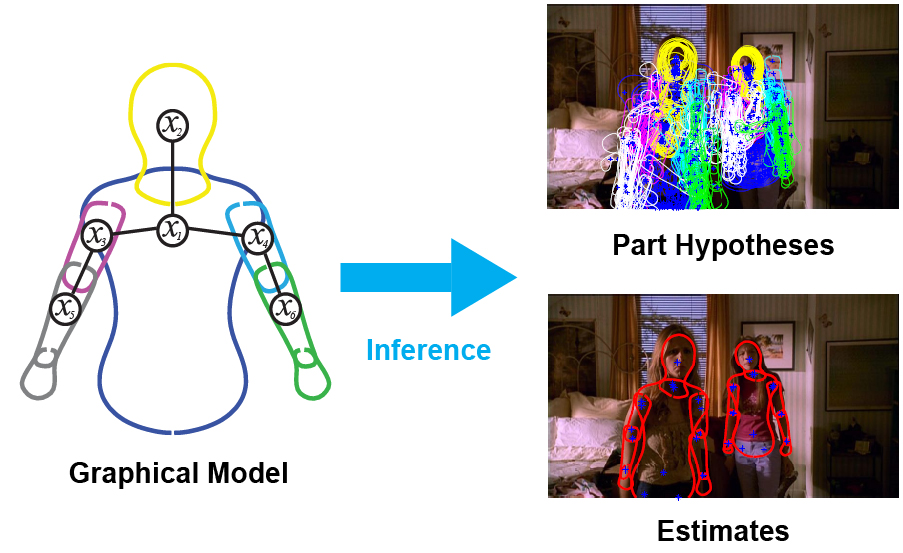

Probabilistic graphical modeling and inference is a powerful modern approach to representing the combined statistics of data and models, reasoning about the world in the face of uncertainty, and learning about it from data. It cleanly separates the notions of representation, reasoning, and learning. It provides a principled framework for combining multiple sources of information, such as prior knowledge about the world, with evidence about a particular case in observed data. This course will provide a solid introduction to the methodology and associated techniques, and show how they are applied in diverse domains ranging from computer vision to computational biology to computational neuroscience.

Textbooks

The following textbook will be used for reading assignments. An electronic copy is available via the UA library webpage (NetID login required):

Course Management

D2L: https://d2l.arizona.edu/d2l/home/1269568

Piazza: https://piazza.com/arizona/spring2023/csc535

Instructor and Contact Information:

Instructor: Jason Pacheco, GS 724

Email: pachecoj@cs.arizona.edu

Office Hours: Fridays, 3-5pm (Zoom via D2L Calendar)

Instructor Homepage: http://www.pachecoj.com

| Date | Topic | Readings | Assignment |
|---|---|---|---|
| 1/11 | Introduction + Course Overview (slides) | W3Schools: Numpy Tutorial; YouTube: Numpy Tutorial: Mr. P Solver | |
| 1/16 | No Class: MLK Day | | |
| 1/18 | Probability and Statistics: Discrete Probability (slides) | CH 2.1 - 2.4 | HW1 (Due: 1/30) |
| 1/23 | Probability and Statistics: Continuous Probability (slides) | CH 2.4 - 2.7 | |
| 1/25 | Probability and Statistics: Bayesian Probability (slides) | CH 3; Additional Resources: YouTube: 3Blue1Brown: Bayes Rule; "Why Isn't Everyone a Bayesian?" Efron, B. 1986; "Objections to Bayesian Statistics" Gelman, A. 2008 | |
| 1/30 | Probabilistic Graphical Models (PGMs): Intro (slides) | CH 10.1 - 10.5 | HW2 (Due: 2/8) |
| 2/1 | PGMs: Directed (slides) | CH 10.1 - 10.5 | |
| 2/6 | PGMs: Undirected (slides) | CH 19.1 - 19.4 | |
| 2/8 | | | HW3 (Due: 2/27); Example factor-to-variable message (Jupyter Notebook) |
| 2/13 | Message Passing Inference (Sum-Product Belief Propagation) (slides) | CH 20; Kschischang, et al. "Factor Graphs and the Sum-Product Algorithm" | |
| 2/15 | Message Passing (Loopy BP, Max-Product BP) (slides) | CH 20; Kschischang, et al. "Factor Graphs and the Sum-Product Algorithm" | |
| 2/20 | Message Passing (Variable Elimination) (slides) | CH 20 | |
| 2/22 | Message Passing (Junction Tree) (slides) | CH 20 | |
| 2/27 | Parameter Learning / Expectation Maximization (slides) | CH 11 | |
| 3/1 | Expectation Maximization (Continued) (slides) | | Midterm (Due: 3/3) |
| 3/6 | No Class: Spring Recess | | |
| 3/8 | No Class: Spring Recess | | |
| 3/13 | Dynamical Systems (HMM, Baum-Welch Learning) (slides) | CH 17 | |
| 3/15 | Dynamical Systems (Linear Dynamical Systems, Kalman Filter) (slides) | CH 17 | HW4 (Due: 3/29) |
| 3/20 | Dynamical Systems (Nonlinear and Switching State-Space) (slides) | CH 18 | |
| 3/22 | | CH 18 | |
| 3/27 | Monte Carlo Methods (Rejection Sampling, Importance Sampling) (slides) | Sec. 23.1-23.4 | |
| 3/29 | Monte Carlo Methods (Rejection Sampling, Importance Sampling) (slides) | Sec. 23.1-23.4 | HW5 (Due: 4/17) |
| 4/3 | Monte Carlo Methods (Sequential Monte Carlo) (slides) | Sec. 23.5 | |
| 4/5 | Monte Carlo Methods (Sequential Monte Carlo) (slides) | Sec. 23.5 | |
| 4/10 | Markov Chain Monte Carlo (Metropolis-Hastings) (slides) | Sec. 24.1-24.4; Neal. "Probabilistic Inference Using MCMC." 1993; Andrieu et al. "An Intro. to MCMC for ML." 2003 | |
| 4/12 | Markov Chain Monte Carlo (Metropolis-Hastings) (slides) | Sec. 24.1-24.4 | |
| 4/17 | Markov Chain Monte Carlo (Gibbs Sampling) (slides) | | HW6 (Due: 5/3) |
| 4/19 | Exponential Family (slides) | Sec. 9.1-9.2 | |
| 4/24 | Exponential Family (slides) | Sec. 9.1-9.2 | |
| 4/26 | Variational Inference (Mean Field) (slides) | Sec. 21.1-21.7 | |
| 5/1 | Variational Inference (Mean Field) (slides) | Sec. 22.1-22.5 | |
| 5/3 | Course Wrap-up (slides) | | |